After a United Nations commission to block killer robots was shut down in 2018, a new report from the international body says the Terminator-like drones are now here.

An autonomous weaponized drone hunted down a human target last year and attacked them without being specifically ordered to, according to a report from the UN Security Council’s Panel of Experts on Libya that was published in March 2021 and covered by New Scientist magazine and the Star. In other words, a drone may have killed one or several people.

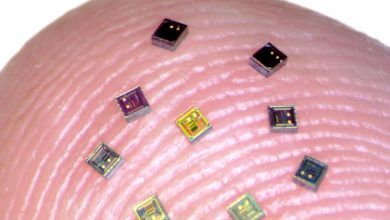

According to the recently uncovered UN report, the March 2020 attack took place in Libya and was carried out by a Kargu-2 quadcopter drone produced by Turkish military tech company STM “during a conflict between Libyan government forces and a breakaway military faction led by Khalifa Haftar, commander of the Libyan National Army,” the Star reports, adding: “The Kargu-2 is fitted with an explosive charge and the drone can be directed at a target in a kamikaze attack, detonating on impact.”

The KARGU is a rotary-wing attack drone designed for asymmetric warfare or anti-terrorist operations, which according to the manufacturer “can be effectively used against static or moving targets through its indigenous and real-time image processing capabilities and machine learning algorithms embedded on the platform.” A video demonstrating the drone shows it targeting mannequins in a field before diving at them and detonating an explosive charge.

“Logistics convoys and retreating HAF were subsequently hunted down and remotely engaged by the unmanned combat aerial vehicles or the lethal autonomous weapons systems such as the STM Kargu-2 (see annex 30) and other loitering munitions,” according to the report.

The drones were operating in a “highly effective” autonomous mode that required no human controller, and the report notes:

“The lethal autonomous weapons systems were programmed to attack targets without requiring data connectivity between the operator and the munition: in effect, a true ‘fire, forget and find’ capability” – suggesting the drones attacked on their own.

Zak Kallenborn, at the National Consortium for the Study of Terrorism and Responses to Terrorism in Maryland, raised the alarm, saying this could be the first time that drones have autonomously attacked humans.

“How brittle is the object recognition system?” Kallenborn asked in the report. “… how often does it misidentify targets?”

Jack Watling, at UK defense think tank Royal United Services Institute, told New Scientist: “This does not show that autonomous weapons would be impossible to regulate. But it does show that the discussion continues to be urgent and important. The technology isn’t going to wait for us.”

In August of last year, Human Rights Watch warned of the need for legislation against “killer robots,” while NYC mayoral candidate Andrew Yang has called for a global ban on them, something the US and Russia are against. But all talks stalled when opponents argued that the technology was not there yet. Since the technology could not yet produce killer robots, the argument went, it would be premature to ban them.