Late last year, Cupertino tech giant Apple Inc made a long-rumored shift for its MacBook notebook lineup when it introduced a custom, in-house-designed system-on-chip (SoC) to power it. Dubbed the Apple M1, this chip is fabricated on Taiwan Semiconductor Manufacturing Company's (TSMC) 5nm semiconductor node, one of the most advanced chipmaking processes in the world.

Yet even though the M1 and its potential successors are aimed at Apple's desktop and notebook computers, a high-level look at the chip's design alongside Tesla Inc's HW 3.0 computer suggests that these might not be the only use cases Apple has in mind for them, or the only ones they end up serving.

Apple’s M1 SoC Lays Down Foundation To Bring Edge Computing For Autonomous Driving

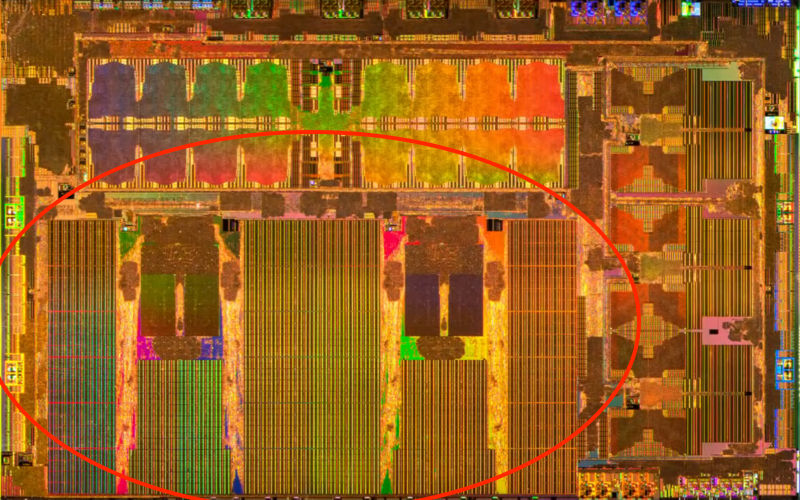

To start off, it's worth looking at what HW 3.0 is made of. Tesla's computer, launched in 2019, features roughly six billion transistors per chip and is built on Samsung Electronics' 14nm manufacturing process. HW 3.0 runs the driving software that Tesla delivers to its vehicles through updates, and uses it to determine the state of the world around the car locally, on the vehicle itself.

HW 3.0 is capable of roughly 74 tera operations per second (TOPS) per chip, a metric used to express the computing capacity of a neural engine. The data a neural engine processes differs from what a central processing unit (CPU) handles, and relates primarily to determining the state of the world outside a Tesla. Tesla's car computer also features one GPU per chip, for a total of two GPUs across the system, giving it 600 GFLOPS per chip and 1.2 TFLOPS for the system as a whole. It also operates in a lock-step redundancy mode: both chips compute the same data at the same time and verify each other's results, while each also acts as a backup in case the other fails.
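As a rough sanity check on these throughput figures, the sketch below derives peak TOPS from NPU parameters. The NPU parameters are assumptions not stated in this article: each of the two NPUs per chip is taken to be a 96×96 multiply-accumulate (MAC) array running at 2GHz, with each MAC counted as two operations. Under those assumptions the result lands at about 73.7 TOPS per chip, in line with the roughly 72 to 74 TOPS quoted for HW 3.0.

```python
# Back-of-the-envelope peak throughput for Tesla's HW 3.0.
# ASSUMPTIONS (not from the article): 96x96 MAC array per NPU,
# two operations (multiply + add) counted per MAC per clock.
MAC_ARRAY_SIDE = 96          # assumed MAC array dimension per NPU
NPU_CLOCK_HZ = 2.0e9         # 2GHz, matching the "two 2GHz cores" NPU figure
OPS_PER_MAC = 2              # one multiply plus one accumulate
NPUS_PER_CHIP = 2
CHIPS_PER_SYSTEM = 2
GPU_GFLOPS_PER_CHIP = 600    # figure quoted in the article


def npu_tops() -> float:
    """Peak tera-operations per second for a single NPU."""
    macs = MAC_ARRAY_SIDE * MAC_ARRAY_SIDE
    return macs * OPS_PER_MAC * NPU_CLOCK_HZ / 1e12


chip_tops = NPUS_PER_CHIP * npu_tops()
system_tops = CHIPS_PER_SYSTEM * chip_tops
system_gpu_tflops = CHIPS_PER_SYSTEM * GPU_GFLOPS_PER_CHIP / 1000

print(f"per NPU:  {npu_tops():.2f} TOPS")
print(f"per chip: {chip_tops:.2f} TOPS")
print(f"system:   {system_tops:.2f} TOPS, GPU {system_gpu_tflops:.1f} TFLOPS")
```

Peak figures like these assume every MAC is busy on every clock cycle; real workloads sustain less.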

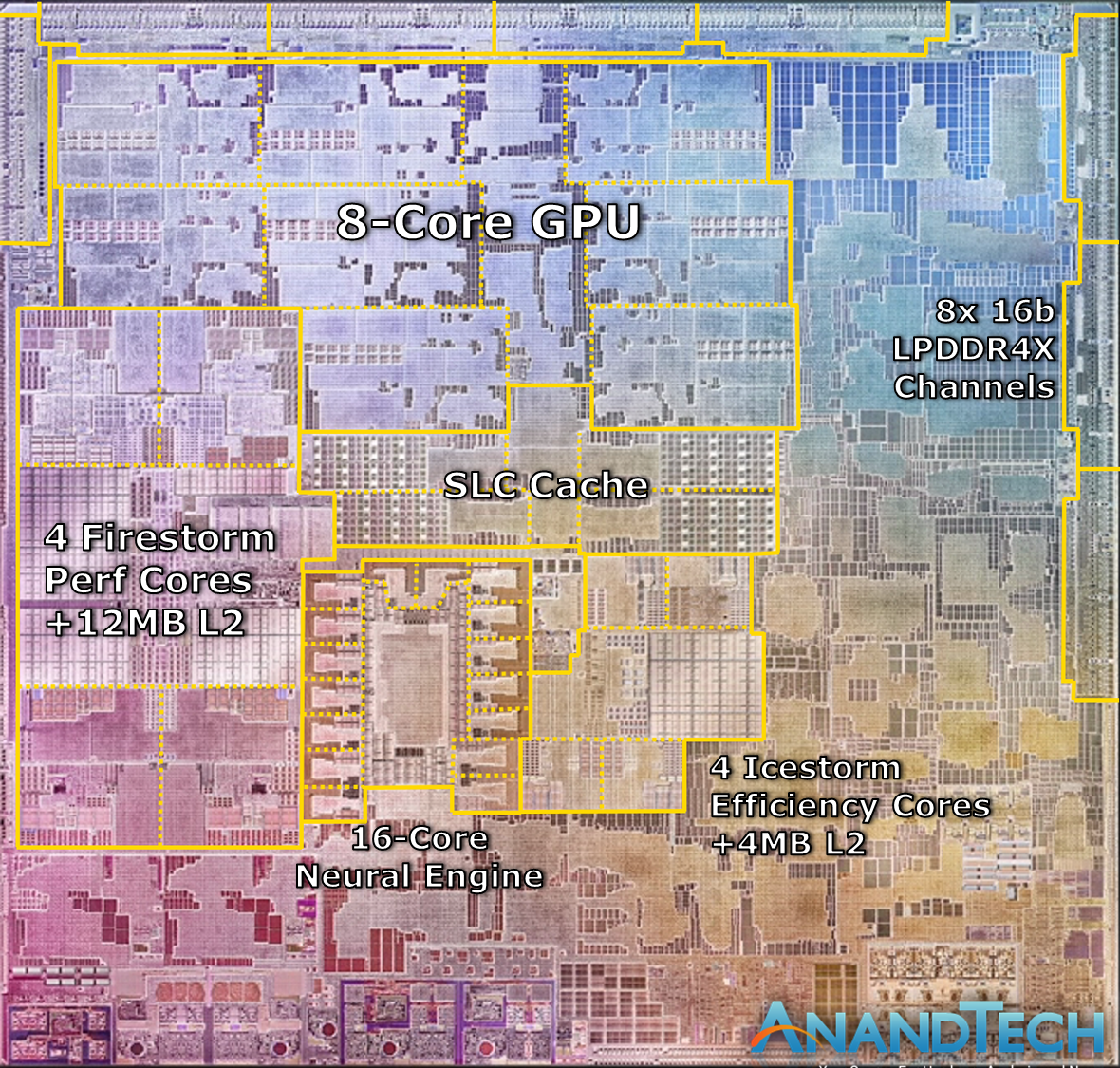

A larger share of each HW 3.0 chip's die is dedicated to its GPU and NPU than to its CPU, which follows from its use case. Apple's M1, on the other hand, devotes more area to the CPU and GPU, which is again understandable since the chip's main purpose is to power notebooks rather than an autonomous driving system.

Tesla’s HW 3.0 Leads The M1 For Neural Processing Due To Being Tailored To A Specific Use-Case

The M1's eight-core GPU lets it breeze past HW 3.0 when it comes to graphics performance. With a peak output of 2.6 TFLOPS (trillion floating-point operations per second), the M1's GPU delivers more than double the 1.2 TFLOPS of Tesla's entire two-chip system, and over four times the 600 GFLOPS of a single HW 3.0 chip. What this shows is that when Apple wants to, it can design hardware that outperforms other options on the market, an effort aided by TSMC's 5nm process, which offers substantially better performance and power efficiency than the older 14nm node. The process advantage also lets Apple squeeze a remarkable 16 billion transistors into a 119mm² die: roughly 2.7 times as many transistors as a chip more than twice its size (HW 3.0 measures 260mm²).
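The transistor comparison above is easier to read as density. This minimal sketch uses only the transistor counts and die areas quoted in this article; the dictionary layout and helper name are illustrative choices.

```python
# Transistor count and density, using the figures quoted in the article.
chips = {
    "Apple M1": {"transistors": 16e9, "die_mm2": 119.0},
    "Tesla HW 3.0 (per chip)": {"transistors": 6e9, "die_mm2": 260.0},
}


def density_mtr_per_mm2(spec: dict) -> float:
    """Millions of transistors per square millimetre of die."""
    return spec["transistors"] / spec["die_mm2"] / 1e6


m1 = chips["Apple M1"]
hw3 = chips["Tesla HW 3.0 (per chip)"]

for name, spec in chips.items():
    print(f"{name}: {density_mtr_per_mm2(spec):.1f} MTr/mm^2")

transistor_ratio = m1["transistors"] / hw3["transistors"]  # ~2.7x more transistors
area_ratio = hw3["die_mm2"] / m1["die_mm2"]                 # HW 3.0 die ~2.2x larger
density_ratio = density_mtr_per_mm2(m1) / density_mtr_per_mm2(hw3)

print(f"M1 has {transistor_ratio:.2f}x the transistors in 1/{area_ratio:.2f} of the area")
print(f"density advantage: {density_ratio:.1f}x")
```

Taken together, the two ratios put the M1's transistor density at close to six times that of a HW 3.0 chip, which is where most of the 5nm versus 14nm advantage shows up.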

For a chip-to-chip comparison, here are the specifications of both chips side by side:

| | Apple M1 | Tesla HW 3.0 (single chip) |
|---|---|---|
| CPU | 8 cores (4 big + 4 LITTLE), max clock 3.2GHz | 12 Cortex-A72 cores, max clock 2.2GHz |
| GPU | 8 cores, 2.6 TFLOPS | Single GPU, 600 GFLOPS |
| NPU | 16 cores, 11 TOPS | Two 2GHz cores, ~72 TOPS |
| Transistors | 16 billion | 6 billion |
| Manufacturing process | TSMC 5nm | Samsung 14nm |
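The table's throughput rows can be turned into simple lead ratios. This sketch uses only the table's numbers (plus the 1.2 TFLOPS two-chip system total quoted earlier); the `compare` helper is a hypothetical name introduced for illustration.

```python
# Lead ratios from the comparison table: M1 vs HW 3.0.
specs = {
    # metric:                        (M1,   HW 3.0)
    "GPU TFLOPS (single chip)":      (2.6,  0.6),
    "GPU TFLOPS (two-chip system)":  (2.6,  1.2),
    "NPU TOPS (single chip)":        (11.0, 72.0),
}


def compare(m1: float, hw3: float) -> tuple[str, float]:
    """Return which design leads on a metric and by what factor."""
    if m1 >= hw3:
        return "M1", m1 / hw3
    return "HW 3.0", hw3 / m1


for metric, (m1, hw3) in specs.items():
    leader, ratio = compare(m1, hw3)
    print(f"{metric:<30} {leader} leads by {ratio:.1f}x")
```

The split is stark: the M1 leads on GPU throughput, while a single HW 3.0 chip delivers roughly six and a half times the M1's neural throughput, which is the gap an Apple driving chip would need to close.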

What this rudimentary comparison suggests is that Apple's M-series design has the potential to take on Tesla in autonomous driving computers. Tesla's HW 3.0 also relies on lower bit-width arithmetic, trading numerical precision for the ability to process more operations, whereas Apple's M1 supports higher bit widths suited to its general-purpose use case.
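To make the bit-width trade-off concrete, the sketch below applies a generic textbook scheme, symmetric 8-bit integer quantization with a single shared scale factor, to a handful of made-up weights. It is an illustration of the general idea only, not Tesla's or Apple's actual numeric format; the function names and sample values are hypothetical.

```python
# Generic symmetric int8 quantization (illustrative only; NOT the
# numeric format used by HW 3.0 or the M1).


def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Map floats to int8 codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 127
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from int8 codes."""
    return [c * scale for c in codes]


# Hypothetical example weights.
weights = [0.8123, -0.0041, 0.3337, -1.2500, 0.0009]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

for w, r in zip(weights, restored):
    print(f"{w:+.4f} -> {r:+.4f} (error {abs(w - r):.4f})")
```

Each value now needs 8 bits instead of 32, and integer multiply-accumulate units are far cheaper in silicon and energy, but the two smallest weights collapse to zero: that is the precision given up in exchange for throughput.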

Summing it all up, with the M1 Apple has created a platform it could adapt into a future autonomous driving chip. By reducing bit widths and shifting die area away from the CPU and GPU toward the NPU, the company could potentially build a chip that matches Tesla's HW 3.0 in neural computing power.

Even If It Makes A Better Chip Than Tesla, Apple Will Still Be Disadvantaged

It could then use this platform in the oft-rumored Project Titan (also dubbed the Apple Car), or even sell it to other automakers. An off-the-shelf Apple autonomous driving platform has been in the rumor mill for quite some time, but such a move would run counter to the company's culture of building exclusive products within a closed ecosystem.

Finally, even if Apple were to outdo Tesla and design a chip superior to HW 3.0, it would still be unable to match the automaker's greatest advantage in the arena: the billions of miles of driving data Tesla has access to for training its artificial intelligence networks. In the world of AI, training is king, and for training, access to copious amounts of data is the single biggest advantage any company can have.

On this front Tesla is in the lead, and with its planned Dojo supercomputing platform, the company hopes to significantly reduce the time needed to churn out new models. Additionally, Tesla is rumored to be working with both TSMC and Samsung on its HW 4.0 computer. The TSMC rumor suggests that the company is looking at the 7nm process node for this chip, likely because capacity on the more advanced 5nm node is limited. The Samsung rumor holds that Tesla's next vehicle chip will be built on a 5nm process node. It is possible that Tesla is keeping both options open: 5nm entices with improved performance, while 7nm offers proven performance and supply reliability.